AI Advertising Is Complicated

The Tradeoff of LLM Ads Isn’t Revenue—It’s Certainty

The trust we place in digital advice is now being quietly tested. Today, when AI platforms deliver recommendations with impeccable confidence and clarity, it’s increasingly difficult to discern where expertise ends and subtle persuasion begins. The introduction of sponsored suggestions—often nestled just beneath authoritative responses—further blurs the line between objective guidance and commercial influence.

This new ambiguity doesn’t necessarily mean the recommendations are wrong, but it does erode our certainty about why they’re right. What once felt like receiving wisdom can now carry the faint aftertaste of marketing. The distinction between advice and advertising, once a bright dividing line, could fade almost to invisibility.

In the internet’s earlier days, we built up mental defenses—skipping banners, scrolling past sponsored posts, and learning to read between the lines of ranked search results. But conversational AI disrupts that model entirely. When a system presents itself as a thoughtful expert—reasoning, explaining, even anticipating our objections—we instinctively lower our guard, treating it less like a billboard and more like a trusted advisor. The incentives haven’t disappeared. They’ve just become foggy.

That uncertainty—the permanent, structural inability to fully trust an AI answer once advertising enters the picture—is the real story of OpenAI’s 2026 ad rollout. Not the revenue mechanics. Not the conversion funnel. The potential corrosion.

On February 9th, OpenAI began rolling “Sponsored Suggestions” into ChatGPT’s free tier. The format is deliberately lightweight: contextually relevant prompts appearing below the AI’s response, clearly labeled, affecting nothing in the actual answer. Paid subscribers—Plus at $20/month, Pro at $200/month—see nothing. The message is unmistakable: the ads are not the product. The discomfort is.

This is the revenue paradox. To grow subscriber revenue, you first must degrade the free experience enough that paying becomes rational—but not so much that you destroy the trust that makes the product worth paying for. It’s a calibration problem that content platforms have solved before. The question is whether LLMs are a fundamentally different kind of product, one where the degradation calculus doesn’t work the same way.

I. What Made This Inevitable

Measured by users, ChatGPT is one of the largest consumer software products ever built. By early 2026, usage was widely reported in the hundreds of millions of weekly active users, likely approaching a billion globally. Only a small fraction pay. At this scale, single-digit conversion rates aren’t a failure; they’re normal.

Let’s assume roughly 5% subscribe. That leaves the vast majority using a product that is expensive to operate and free at the point of use.
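To make the split concrete, here is a minimal sketch of the arithmetic. The 5% paid share is the assumption stated above; the 800 million weekly user count is a hypothetical placeholder chosen to sit inside the reported "hundreds of millions" range, not a reported figure.

```python
def split_users(total_users: int, paid_share: float) -> tuple[int, int]:
    """Return (paying, free) user counts for a given paid share."""
    paying = round(total_users * paid_share)
    return paying, total_users - paying


# Hypothetical inputs: 800M weekly users is a placeholder,
# 5% is the paid share assumed in the text.
TOTAL_USERS = 800_000_000
PAID_SHARE = 0.05

paying, free = split_users(TOTAL_USERS, PAID_SHARE)
free_per_paying = free / paying

print(f"paying: {paying:,}")
print(f"free: {free:,}")
print(f"free users per paying user: {free_per_paying:.0f}")
```

Under these assumptions, each paying subscriber sits alongside roughly 19 free users. The ratio depends only on the 5% share, not on the placeholder user count.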

Those costs are no secret. Frontier AI requires massive, ongoing investment in compute, specialized chips, data centers, and energy. OpenAI and its partners have been explicit about the capital intensity involved. This is not software with near-zero marginal cost. It looks more like infrastructure.

Revenue has grown quickly—into the tens of billions of dollars on an annualized basis by early 2026—but expenses have grown with it. Even optimistic projections imply years of heavy spending before margins resemble those of mature software companies.

At that point, the arithmetic stops being theoretical. If fewer than one in ten users will pay directly, the remaining nine must be monetized indirectly. There are many ideas, but only one mechanism that has ever worked at global consumer scale.

Advertising.

Not banners or pop-ups, but native, decision-adjacent advertising: the kind that appears precisely when a user is asking for advice or forming a preference.

This logic is familiar. It shaped search engines and social networks before it. What’s new is the interface. AI doesn’t present options; it presents an answer. A synthesized judgment that feels earned rather than placed.

The prices are public. The incentives are obvious. The history is clear.

Advertising inside AI systems isn’t a deviation from the model. It’s the condition for the model’s survival. And once you see that, the trust problem becomes unavoidable.

Advertising is the most visible lever, but it isn’t the only one. Capability throttling—slower response times, tighter rate limits, reduced context windows, or model downgrades for free users—is a quieter form of the same pressure. OpenAI already tiers model access between free and Plus; the question is how far that gap widens. Throttling has a meaningful advantage over advertising: it degrades the experience without contaminating the answer. A slower response doesn’t make you wonder whether the recommendation was bought. That distinction matters, and it shapes how aggressively each lever can be pulled.

II. Why the Spotify Comparison Is Imperfect

The natural comparison is Spotify, and it’s useful, up to a point.

Spotify operates a free tier with ads and a paid tier without them. As of 2024, it had 268 million paying subscribers out of 678 million monthly active users. That is a conversion rate of roughly 40%, which is extraordinary by any measure. The industry average for freemium products is 2% to 5%, so Spotify operates at roughly 8x to 20x that baseline.
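The ratio is easy to check. The short sketch below uses only the figures quoted above (268 million subscribers, 678 million monthly active users, and the 2% to 5% freemium range); the variable names are illustrative.

```python
# Figures quoted in the text above.
PAYING_SUBSCRIBERS = 268_000_000
MONTHLY_ACTIVE_USERS = 678_000_000
FREEMIUM_LOW, FREEMIUM_HIGH = 0.02, 0.05  # industry range cited in the text

conversion = PAYING_SUBSCRIBERS / MONTHLY_ACTIVE_USERS
multiple_vs_high = conversion / FREEMIUM_HIGH  # against the 5% end of the range
multiple_vs_low = conversion / FREEMIUM_LOW    # against the 2% end of the range

print(f"conversion: {conversion:.1%}")               # conversion: 39.5%
print(f"vs 5% benchmark: {multiple_vs_high:.1f}x")   # vs 5% benchmark: 7.9x
print(f"vs 2% benchmark: {multiple_vs_low:.1f}x")    # vs 2% benchmark: 19.8x
```

Measured against the top of the freemium range, Spotify converts at about 8 times the norm; measured against the bottom, closer to 20 times. Either way, it sits far above the typical freemium product.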

How? The friction is calibrated. Free users get all the music, interrupted by ads between songs. Skip limits create mild frustration. The premium value proposition—no ads, offline listening, unlimited skips—is clear and tangible. Spotify’s CFO described the ad-supported tier not as a revenue line but as a conversion funnel: 60% of premium subscribers were free users first.

Netflix followed a similar logic in 2022, introducing an ad-supported tier after years of resistance. Subscriber counts at that tier doubled within a year. The pattern is consistent: strategic friction, when well-calibrated, converts.

But the Spotify comparison breaks at a critical point: ad adjacency.

When Spotify plays an ad between songs, you never wonder whether the song itself was chosen by an advertiser. The content is separate from the commerce. You might dislike the interruption, but the song, the playlist, the recommendation—these are uncontaminated. The trust relationship between user and platform is orthogonal to advertising.

With an LLM, that separation doesn’t exist. The answer is the content. And once you’ve seen a sponsored suggestion adjacent to that answer, you can never unsee it.

OpenAI has named this “Answer Independence” and made it a stated principle of their advertising approach. The fact that they felt the need to name it tells you everything about the underlying vulnerability.

Perplexity learned this the hard way. Their foray into advertising—sponsored follow-up questions inside a search AI interface—failed quickly. The issue wasn’t revenue mechanics; it was perception. In a chat interface, ads felt like bias. Users couldn’t distinguish between a suggested question that was organically useful and one that was paid for. The product was contaminated by proximity, not by actual manipulation.

The experiment was pulled. The lesson remains.

III. What This Means For Customers

If you use ChatGPT for medical questions, financial advice, product comparisons, career decisions, or any query where the answer matters—you now have a new variable to factor in. Not “is this answer correct?” but “is this answer influenced?”

That’s not a question Spotify ever forced you to ask about a playlist. It’s not a question Netflix forced you to ask about a recommendation. It’s a question that only arises when the product is synthesis, guidance, judgment—when the AI isn’t just serving content but shaping how you think about a decision.

The discomfort is real. It’s rational. Here’s why:

The architecture of influence is invisible. When a search engine shows you a sponsored link, the sponsorship is spatially separate from the organic results. You can see the boundary. In an LLM conversation, there is no boundary between “the AI’s actual reasoning” and “the AI’s commercial context.” The model’s weights, the training data, the RLHF tuning, the system prompt—these are all upstream of any ad product, but they’re also upstream of everything else. The question “was this answer influenced?” doesn’t have a clean answer because the answer depends on where you draw the line.

Disclosure doesn’t solve the problem. OpenAI’s “Sponsored Suggestions” are clearly labeled. They appear below the response. They’re easy to distinguish from the actual output. The challenge is not knowing what you don’t know. The ad you can see isn’t the concern. It’s whether the relationship that produced the ad also shaped the answer in ways you can’t see.

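Trust degrades asymmetrically. It is lost faster than it is rebuilt: once you’ve wondered whether an AI answer was influenced, you’ll wonder again, and the uncertainty compounds. Advice funded by advertising has always carried a conflict of interest. LLMs just made that conflict ambient.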

IV. The Pendulum Model

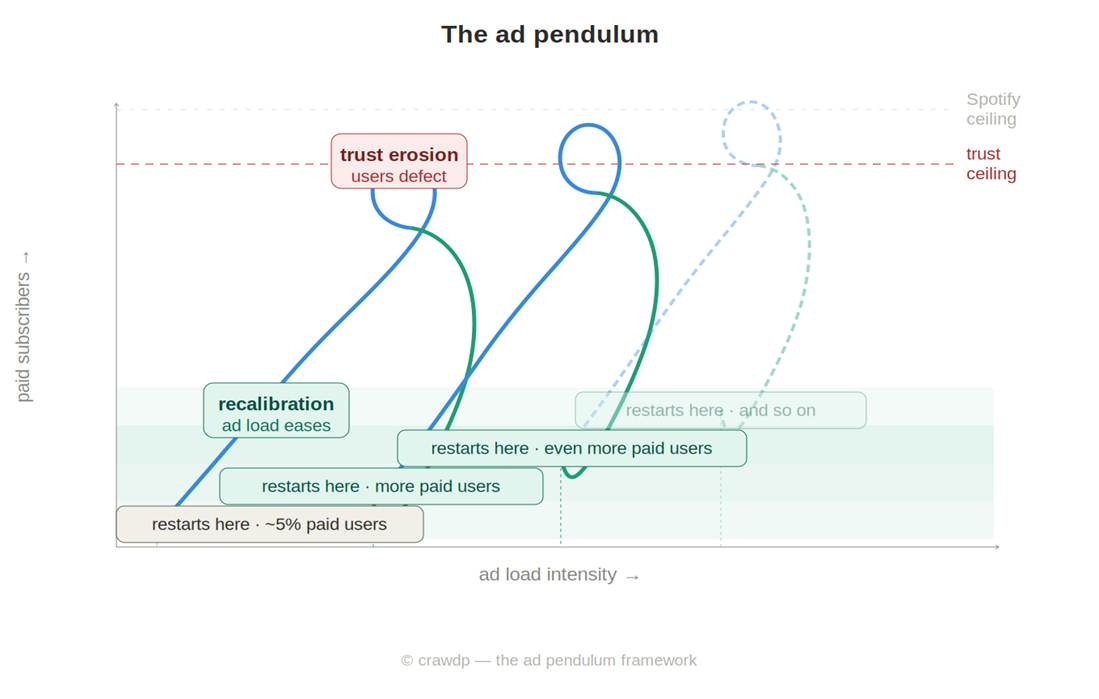

I don’t believe LLM ad intensity will settle into a stable equilibrium. It’s more like a managed oscillation: a pendulum that will swing deliberately between heavier and lighter ad loads based on where a platform is in its subscriber growth cycle.

The pendulum has four phases:

Phase 1—Ad Introduction. Ads enter the free tier at low intensity. Friction increases marginally. Some users convert to paid to escape the disruption. Ad revenue begins monetizing the non-paying majority. The dual revenue stream emerges.

Phase 2—Ad Escalation. Financial pressure (ongoing losses, investor expectations) and slowing organic growth push ad load higher. Conversion accelerates as the free experience degrades further. This is the intended mechanism—the ads are doing their job. Throttling often does the earlier, cleaner work here—degrading the experience without the trust exposure that ad adjacency carries. Ads likely enter when throttling alone hasn’t moved enough free users to paid.

Phase 3—Trust Erosion. Heavy ad adjacency to AI answers begins to corrode the core value proposition. Users start to question answer independence. Some defect to cleaner alternatives. This is the LLM-specific failure mode—one that Spotify and Netflix, with their content-ad separation, largely avoided.

Phase 4—Recalibration. The platform dials back ad load to restore trust. The free experience improves. The cycle restarts—but at a higher baseline subscriber count than before.

Each swing of the pendulum converts another cohort. The net result, over time, is a subscription base that looks nothing like today’s assumed 5%.

The constraint is that the trust cost per ad impression is higher for an AI assistant than for a music streaming service. That elevated cost means the pendulum’s effective range is narrower. OpenAI can’t run the ad load that Spotify runs. The recalibration trigger fires earlier. The ceiling is most likely lower.

Platforms that can operate with a lower trust cost per ad—either because their formats are more clearly separated from their answers, or because their users have been conditioned to accept commercial adjacency—will have a wider pendulum range. Google, with decades of training users to tolerate sponsored results alongside organic answers, may have a structural advantage here that no one fully appreciates.
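One way to see why a higher trust cost narrows the pendulum’s range is a toy simulation. This is a minimal sketch, not a model of any real platform: the `Platform` class, the `simulate` function, the 0-to-100 trust scale, the recalibration floor of 50, and both trust-cost values are hypothetical choices made purely for illustration. It covers only the trust side of the cycle and does not model conversion.

```python
from dataclasses import dataclass


@dataclass
class Platform:
    name: str
    trust_cost_per_ad: float  # hypothetical: trust lost per unit of ad load, per step


def simulate(platform: Platform, steps: int = 40, ad_step: float = 1.0,
             start_trust: float = 100.0, trust_floor: float = 50.0) -> tuple[float, int]:
    """Toy pendulum: escalate ad load until trust hits the floor, then reset.

    Returns (peak ad load reached, number of recalibrations).
    """
    trust, ad_load = start_trust, 0.0
    peak, recalibrations = 0.0, 0
    for _ in range(steps):
        ad_load += ad_step                              # Phases 1-2: introduce, then escalate
        trust -= platform.trust_cost_per_ad * ad_load   # Phase 3: erosion grows with ad load
        peak = max(peak, ad_load)
        if trust <= trust_floor:                        # Phase 4: recalibrate
            ad_load, trust = 0.0, start_trust
            recalibrations += 1
    return peak, recalibrations


# Hypothetical trust costs: the assistant pays 4x more trust per ad than the streamer.
for p in (Platform("ai_assistant", 4.0), Platform("music_streaming", 1.0)):
    peak, resets = simulate(p)
    print(f"{p.name}: peak ad load {peak:.0f}, recalibrations {resets}")
```

With these made-up parameters, the high-trust-cost platform has to pull back after reaching an ad load of 5, while the low-trust-cost platform reaches 10 before recalibrating. The direction of the result, not the specific numbers, is the point: the costlier each ad is in trust, the earlier the recalibration trigger fires and the lower the ceiling.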

V. Claude’s Bet

Anthropic’s choice to remain ad-free is not an accident, and it is not primarily a values statement. It is likely a business strategy predicated on a specific belief: that trust is worth more than reach.

By early 2026, Anthropic had reached approximately $19 billion in annualized revenue—up from roughly $4 billion in mid-2025. That trajectory is driven almost entirely by enterprise contracts: large cloud partnerships with Google and Amazon, API integrations with enterprise software vendors, and direct enterprise deals where the sales proposition centers on trust and reliability.

Ads would compromise that positioning directly. Not because enterprise customers would see ads—they wouldn’t—but because the mere existence of an ad-supported consumer tier changes how a company is perceived. In enterprise technology sales, trust is a vendor-level attribute, not a product-tier attribute. If Claude has ads, Claude is an ad-supported AI company, full stop. The enterprise buyer’s risk calculus shifts.

The Anthropic bet is that the enterprise AI market—where contracts are larger, churn is lower, and the product genuinely needs to be trusted—is worth more, structurally, than the consumer advertising market. This makes sense for the company for now. The question is whether it remains correct as OpenAI’s subscriber base grows and its enterprise offering matures.

VI. A Key Question

In 2024, Sam Altman called advertising a “last resort,” arguing it “fundamentally misaligns user incentives with the company providing the service.” He wasn’t wrong. He just ran out of runway.

The architectural question—whether answer independence can be maintained at scale, and whether users will believe it—is the one that determines whether the pendulum model works at all. If users come to believe that ChatGPT’s answers are shaped by commercial relationships, the trust cost per ad impression rises toward infinity. The conversion mechanism collapses. The free tier becomes worthless, the paid tier loses its value signal, and the whole model unravels.

That outcome is not inevitable. Bing has operated sponsored results alongside AI answers since 2023 without obvious trust collapse. On a much larger scale, Google has conditioned a generation to accept commercial adjacency in search. The precedents for coexistence exist.

But LLMs are categorically different from search in one important way: they synthesize, they recommend, they guide decisions. A sponsored link in a search result is easy to ignore. A sponsored suggestion in a multi-turn conversation about which vendor to choose, which doctor to see, or which investment to make is something else entirely. The stakes of bias are higher, and the mechanisms of bias are harder to see.

OpenAI’s answer—sponsored suggestions below the response, never inside it, clearly labeled, no influence on answers—is a reasonable first attempt. Whether it survives contact with growth targets, advertiser pressure, and a user base that has every reason to be skeptical is the question that will define the next three years of AI monetization.

What to Watch

Four signals will tell you whether the pendulum model is working as intended or breaking down:

1. Paid conversion rate trajectory. If ads are functioning as the intended conversion engine, ChatGPT’s paid subscriber count should accelerate meaningfully in 2026.

2. Ad load escalation vs. reversion. The pendulum theory predicts that ad intensity will not hold steady. Watch for either a quiet dialing-up of frequency and placement, or a public announcement of ad pullback framed as a “user experience” improvement. Both are data points about where OpenAI believes it is in the cycle.

3. Enterprise perception of Claude vs. ChatGPT. If Anthropic’s ad-free positioning is functioning as a competitive differentiator, it should show up in enterprise win rates and contract size over the next 12–18 months. That data won’t be public, but it will be visible in revenue trajectories and in how enterprise buyers talk about the choice.

4. Free tier capability gaps. Watch for quiet reductions in what free users can do—model access, message limits, context length. If the gap between free and paid widens without a corresponding ad pullback, it signals OpenAI is rebalancing toward throttling as the primary conversion mechanism. That would be the healthier outcome for long-term trust.

Any AI that wants to be both free and trusted eventually hits the same wall: monetization introduces incentives, and incentives corrode certainty. Anthropic is betting that trust is worth more than reach. OpenAI is betting that trust can survive careful commercialization. Both bets make sense. Only one scales without breaking the product. If AI is going to help people decide what to buy, who to trust, or what to believe, then advertising isn’t just a revenue strategy—it’s a ceiling.

Crawford Del Prete is a Senior Advisor at PSG Equity and former President of IDC. He writes about enterprise software, AI, and technology market dynamics. All opinions are his.